Meta has unveiled the next generation of its Meta Training and Inference Accelerator (MTIA), a significant step in its proprietary AI infrastructure. The updated MTIA is designed to support a wide range of AI workloads, including generative AI products, recommendation systems, and advanced AI research. Meta expects its compute requirements to grow as its AI models become more sophisticated.

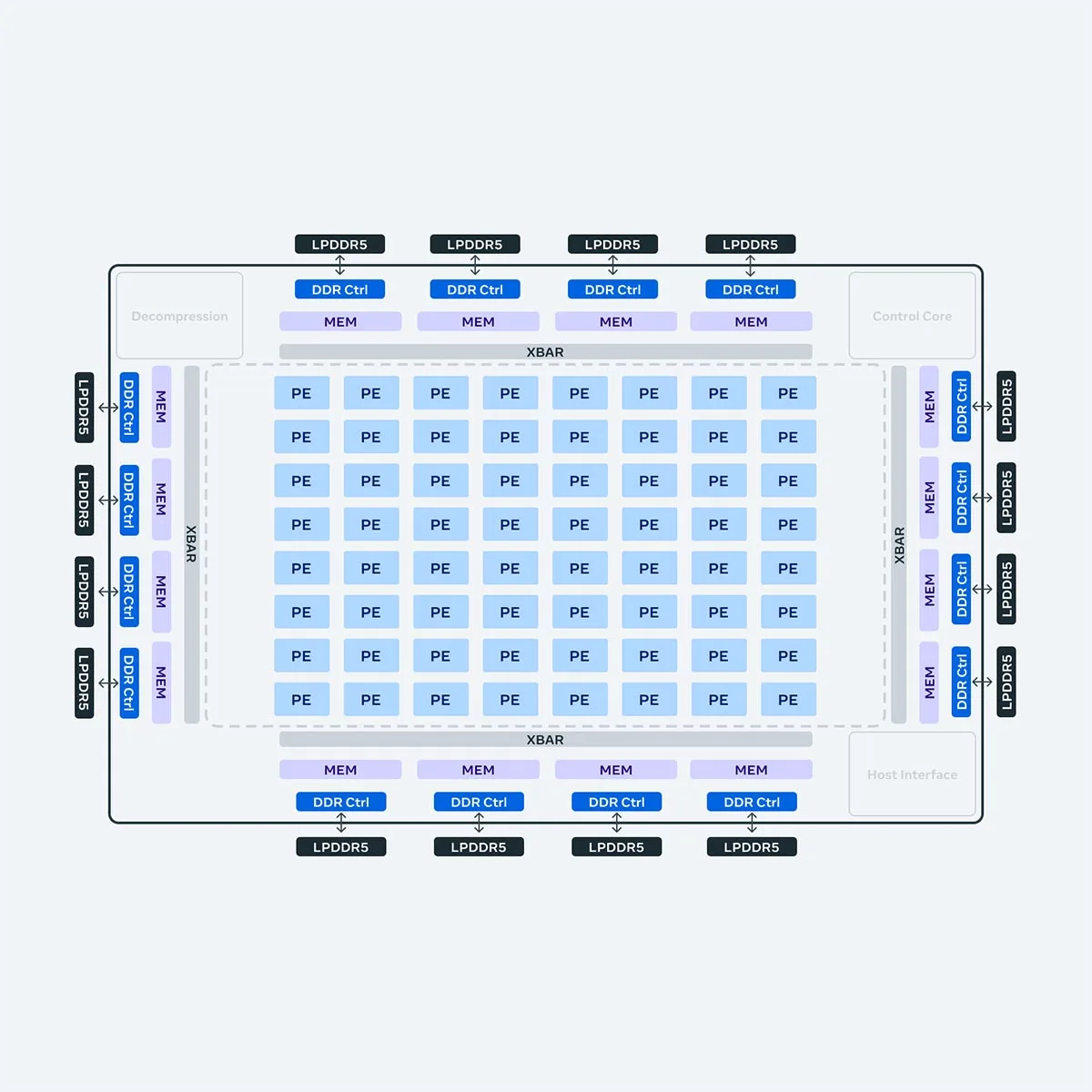

The new MTIA more than doubles the compute and memory bandwidth of its first-generation predecessor, which lets the accelerator handle more complex and memory-intensive AI operations efficiently. The next-generation MTIA’s enhanced specifications include:

- Compute Performance: Dense compute performance has increased 3.5 times, and sparse compute performance has increased 7 times.

- Memory Upgrades: Local memory per processing element (PE) has increased from 128 KB to 384 KB, with on-chip memory growing from 128 MB to 256 MB, and off-chip LPDDR5 memory doubling from 64 GB to 128 GB.

- Bandwidth Improvements: The bandwidth for local memory per PE has surged from 400 GB/s to 1 TB/s, with on-chip memory bandwidth increasing from 800 GB/s to 2.7 TB/s.

These advancements are part of Meta’s strategic shift towards reducing its dependency on third-party hardware providers like NVIDIA and building a more self-reliant, optimized hardware ecosystem. The move aligns with Meta’s broader goal of integrating its hardware and software stack more tightly, so that its infrastructure can scale efficiently across its many AI applications.

| Specification | First Gen MTIA | Next Gen MTIA |

|---|---|---|

| Technology | TSMC 7nm | TSMC 5nm |

| Frequency | 800MHz | 1.35GHz |

| Instances | 1.12B gates, 65M flops | 2.35B gates, 103M flops |

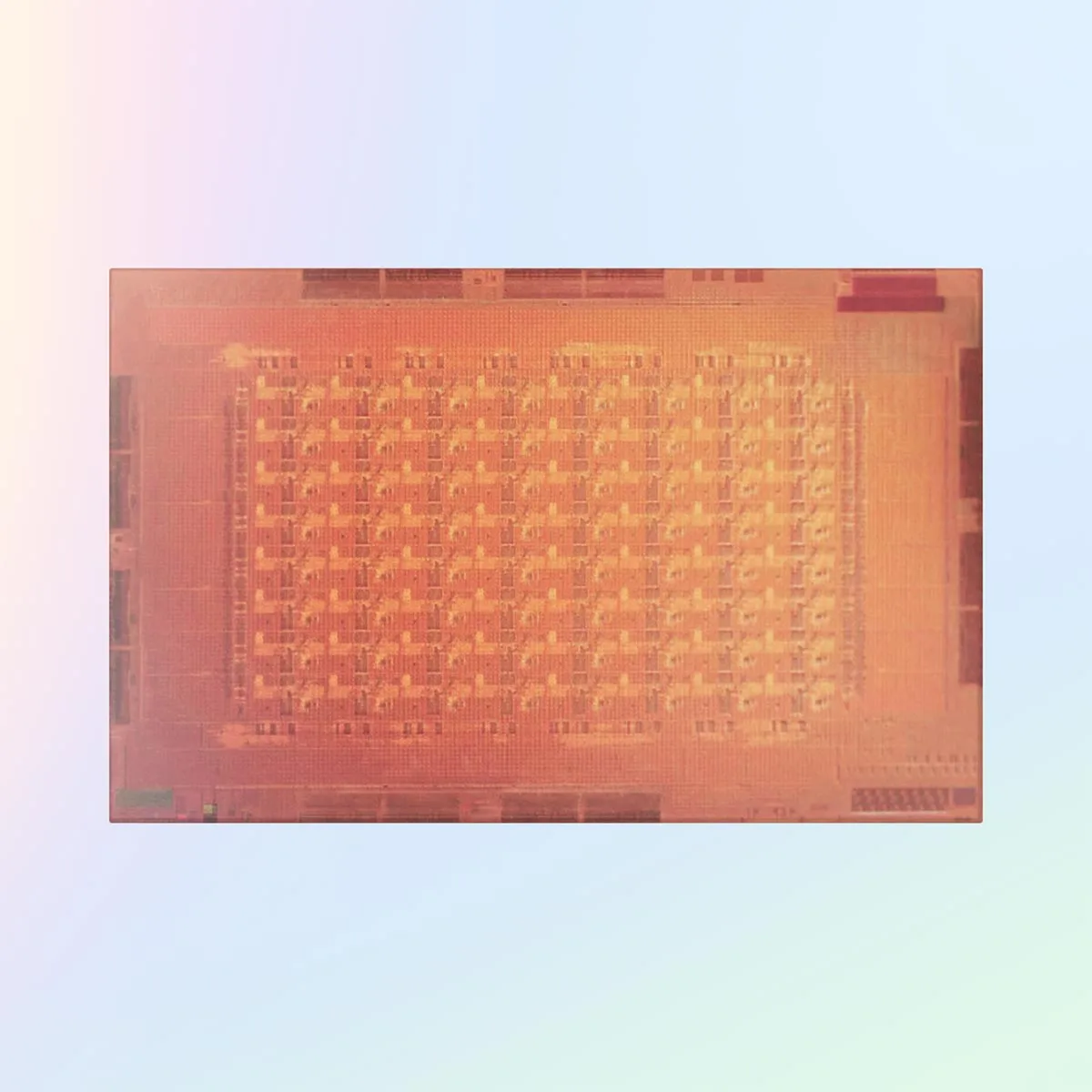

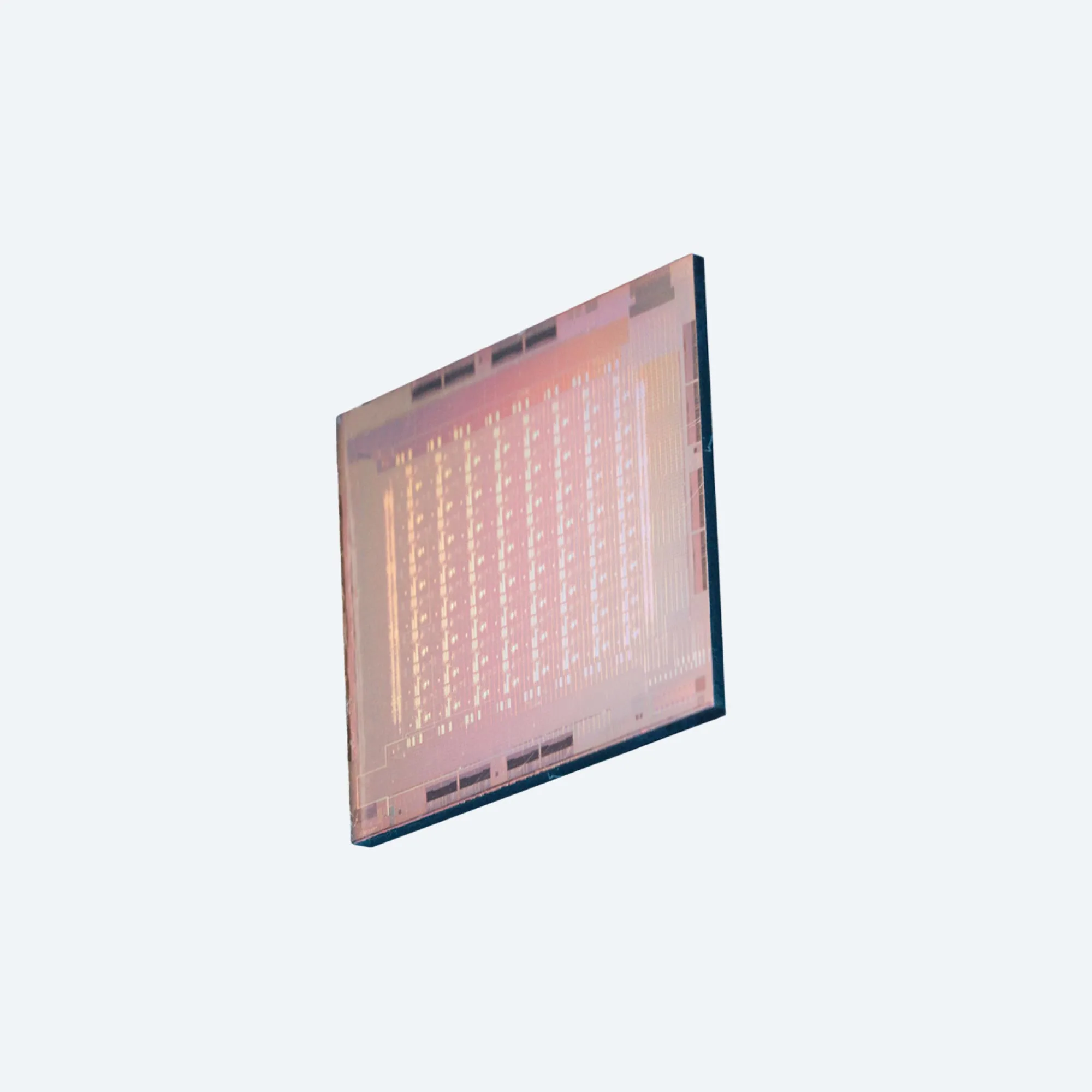

| Area | 19.34mm x 19.1mm, 373mm² | 25.6mm x 16.4mm, 421mm² |

| Package | 43mm x 43mm | 50mm x 40mm |

| Voltage | 0.67V logic, 0.75V memory | 0.85V |

| TDP | 25W | 90W |

| Host Connection | 8x PCIe Gen4 (16 GB/s) | 8x PCIe Gen5 (32 GB/s) |

| GEMM TOPS | 102.4 TFLOPS/s (INT8) 51.2 TFLOPS/s (FP16/BF16) |

708 TFLOPS/s (INT8) (sparsity) 354 TFLOPS/s (INT8) 354 TFLOPS/s (FP16/BF16) (sparsity) 177 TFLOPS/s (FP16/BF16) |

| SIMD TOPS | Vector core: 3.2 TFLOPS/s (INT8), 1.6 TFLOPS/s (FP16/BF16), 0.8 TFLOPS/s (FP32) SIMD: 3.2 TFLOPS/s (INT8/FP16/BF16), 1.6 TFLOPS/s (FP32) |

Vector core: 11.06 TFLOPS/s (INT8), 5.53 TFLOPS/s (FP16/BF16), 2.76 TFLOPS/s (FP32) SIMD: 5.53 TFLOPS/s (INT8/FP16/BF16), 2.76 TFLOPS/s (FP32) |

| Memory Capacity | Local memory: 128 KB per PE On-chip memory: 128 MB Off-chip LPDDR5: 64 GB |

Local memory: 384 KB per PE On-chip memory: 256 MB Off-chip LPDDR5: 128 GB |

| Memory Bandwidth | Local memory: 400 GB/s per PE On-chip memory: 800 GB/s Off-chip LPDDR5: 176 GB/s |

Local memory: 1 TB/s per PE On-chip memory: 2.7 TB/s Off-chip LPDDR5: 204.8 GB/s |
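The headline multipliers can be cross-checked against the table with a little arithmetic. The sketch below is only an illustration: the dictionary keys and helper names are made up for this example, the numbers are the peak figures from the table, and the "balance point" (peak operations per byte of off-chip traffic) is a rough roofline-style estimate rather than a measured result.

```python
# Peak figures copied from the specification table above.
# Key names are hypothetical, chosen only for this example.
FIRST_GEN = {
    "int8_dense_tflops": 102.4,
    "fp16_dense_tflops": 51.2,
    "lpddr5_gb_per_s": 176.0,
}
NEXT_GEN = {
    "int8_dense_tflops": 354.0,
    "int8_sparse_tflops": 708.0,
    "fp16_dense_tflops": 177.0,
    "lpddr5_gb_per_s": 204.8,
}


def speedup(new: float, old: float) -> float:
    """Generation-over-generation ratio of two peak figures."""
    return new / old


def balance_point(tflops: float, gb_per_s: float) -> float:
    """Peak operations available per byte of off-chip memory traffic.

    A kernel needs at least this arithmetic intensity to be limited by
    compute rather than by LPDDR5 bandwidth (simple roofline estimate).
    """
    return (tflops * 1e12) / (gb_per_s * 1e9)


dense = speedup(NEXT_GEN["int8_dense_tflops"], FIRST_GEN["int8_dense_tflops"])
# Sparse throughput is compared against first-gen dense INT8, since the
# table lists no sparse figure for the first generation.
sparse = speedup(NEXT_GEN["int8_sparse_tflops"], FIRST_GEN["int8_dense_tflops"])

old_balance = balance_point(FIRST_GEN["fp16_dense_tflops"], FIRST_GEN["lpddr5_gb_per_s"])
new_balance = balance_point(NEXT_GEN["fp16_dense_tflops"], NEXT_GEN["lpddr5_gb_per_s"])

print(f"Dense INT8 speedup:  {dense:.2f}x")   # 3.46x
print(f"Sparse INT8 speedup: {sparse:.2f}x")  # 6.91x
print(f"FP16 ops per off-chip byte: {old_balance:.0f} -> {new_balance:.0f}")  # 291 -> 864
```

The ratios come out at roughly 3.46x and 6.91x, in line with the rounded 3.5x and 7x figures. The balance-point estimate also suggests why the larger and faster on-chip memory matters: off-chip bandwidth grows far less than peak compute, so more data has to be served from local and on-chip memory to keep the compute units busy.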

Alongside the hardware upgrades, Meta has refined the MTIA software stack to improve integration and operational efficiency. The stack is designed to integrate with PyTorch 2.0 and includes features aimed at developer productivity and computational performance. Developing the hardware and software together underlines Meta’s commitment to its internal AI capabilities, with the goal of significantly improving the performance and efficiency of its AI deployments.